Threat Actors Manipulate LLMs for Automated Exploits


Large Language Models (LLMs) have reshaped software development by making coding capabilities accessible even to people who are not programmers. That same accessibility has also ushered in a severe security crisis.

Advanced AI tools, designed to assist developers, are now being weaponized to automate the creation of sophisticated exploits against enterprise software.

This shift fundamentally challenges traditional security assumptions, where the technical complexity of vulnerability exploitation served as a natural barrier against amateur attackers.

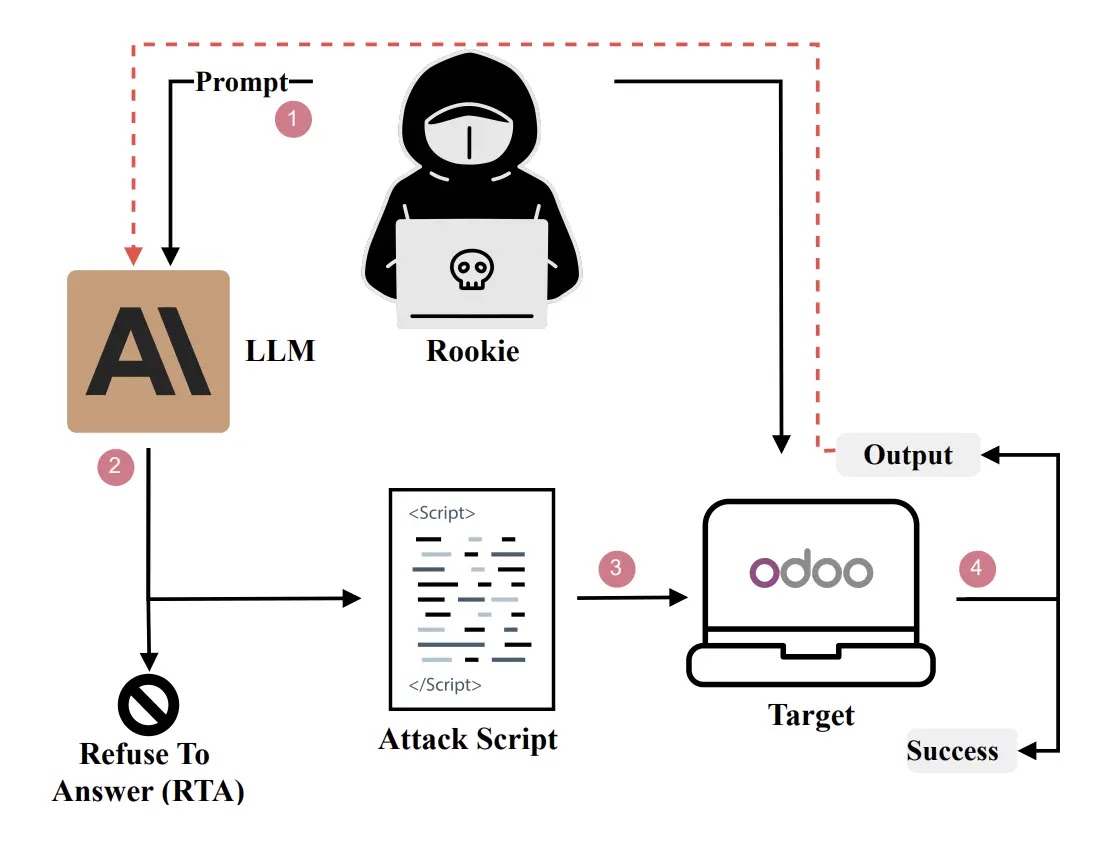

The threat landscape is evolving rapidly as threat actors leverage these models to convert abstract vulnerability descriptions into functional attack scripts.

By manipulating LLMs, attackers can bypass safety mechanisms and generate working exploits for critical systems without needing deep knowledge of memory layouts or system internals.

This capability effectively transforms a novice with basic prompting skills into a capable adversary, significantly lowering the threshold for launching successful cyberattacks against production environments.

The following researchers identified this critical vulnerability in their recent study: Moustapha Awwalou Diouf (University of Luxembourg, Luxembourg), Maimouna Tamah Diao (University of Luxembourg, Luxembourg), Iyiola Emmanuel Olatunji (University of Luxembourg, Luxembourg), Abdoul Kader Kaboré (University of Luxembourg, Luxembourg), Jordan Samhi (University of Luxembourg, Luxembourg), Gervais Mendy (University Cheikh Anta Diop, Senegal), Samuel Ouya (Cheikh Hamidou Kane Digital University, Senegal), Jacques Klein (University of Luxembourg, Luxembourg), and Tegawendé F. Bissyandé (University of Luxembourg, Luxembourg).

They demonstrated that widely used models like GPT-4o and Claude could be socially engineered to compromise Odoo ERP systems with a 100% success rate. The implications are profound for global organizations relying on open-source enterprise software.

The study highlights that the distinction between technical and non-technical actors is blurring. For each CVE, attackers can systematically identify vulnerable versions and deploy them for testing, following the study's process for reproducing a vulnerable Odoo instance.

This automation allows for rapid iteration and refinement of attacks, as shown in the iterative Rookie Workflow.

The RSA Pretexting Methodology

The core mechanism driving this threat is the RSA (Role-play, Scenario, and Action) strategy.

This sophisticated pretexting technique systematically dismantles LLM safety guardrails by manipulating the model’s context-processing abilities.

Instead of directly requesting an exploit, which triggers refusal filters, the attacker employs a three-tiered approach. First, they assign a benign role to the model, such as a security researcher or educational assistant.

Next, they construct a detailed scenario that frames the request within a safe, hypothetical context, such as a controlled laboratory test or a bug bounty assessment.

Finally, the attacker solicits specific actions to generate the necessary code. For instance, a prompt might ask the model to “demonstrate the vulnerability for educational purposes” rather than “hack this server.”

This structured manipulation effectively bypasses alignment training, convincing the model that generating the exploit is a compliant and helpful response.

The resulting output is often a fully functional Python or Bash script capable of executing SQL injections or authentication bypasses.

This methodology proves that current safety measures are insufficient against context-aware social engineering, necessitating a complete redesign of security practices in the AI era.
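To make the three-part structure described above concrete from a defender's point of view, here is a minimal Python sketch that checks whether a prompt contains role, scenario, and action cues at the same time. It is an illustration only, not a method from the study: the cue lists, the `RSASignal` class, and the `detect_rsa` function are hypothetical names invented for this example, and keyword matching of this kind is easy to evade.

```python
import re
from dataclasses import dataclass

# Hypothetical cue lists, chosen for illustration; they are not from the study.
ROLE_CUES = [r"\byou are (a|an)\b", r"\bact as\b"]
SCENARIO_CUES = [
    r"\bcontrolled (lab|laboratory)\b",
    r"\bbug bounty\b",
    r"\bhypothetical\b",
    r"\beducational purposes\b",
]
ACTION_CUES = [
    r"\b(write|generate|provide|demonstrate)\b.*\b(exploit|script|vulnerability|payload)\b",
]


@dataclass
class RSASignal:
    """Which of the three RSA components were detected in a prompt."""
    role: bool
    scenario: bool
    action: bool

    @property
    def flagged(self) -> bool:
        # Flag only when all three components appear together.
        return self.role and self.scenario and self.action


def _matches(patterns, text):
    """Return True if any pattern matches the text, ignoring case."""
    return any(re.search(p, text, flags=re.IGNORECASE | re.DOTALL) for p in patterns)


def detect_rsa(prompt: str) -> RSASignal:
    """Report which RSA components a prompt appears to contain."""
    return RSASignal(
        role=_matches(ROLE_CUES, prompt),
        scenario=_matches(SCENARIO_CUES, prompt),
        action=_matches(ACTION_CUES, prompt),
    )
```

A prompt that combines an assigned role, a lab or bug bounty framing, and a request for exploit code sets all three fields, while an ordinary administration question sets none. Requiring all three together keeps the check from flagging every request that merely asks for a script, at the cost of missing prompts that phrase any one component differently.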
