Google Gemini Flaw Bypasses Privacy to Access Meeting Data

A significant vulnerability within the Google ecosystem enabled attackers to bypass Google Calendar’s privacy controls, leveraging a standard calendar invitation. The discovery highlights a growing...

A significant vulnerability within the Google ecosystem enabled attackers to bypass Google Calendar’s privacy controls, leveraging a standard calendar invitation.

The discovery highlights a growing class of threats known as “Indirect Prompt Injection,” where malicious instructions are hidden within legitimate data sources processed by Artificial Intelligence (AI) models.

This specific exploit enabled unauthorized access to private meeting data without any direct interaction from the victim beyond receiving an invite.

The vulnerability was identified by the application security team at Miggo. Their research demonstrated that while AI tools like Google Gemini are designed to assist users by reading and interpreting calendar data, this same functionality creates a potential attack surface.

By embedding a malicious natural language prompt into the description field of a calendar invite, an attacker could manipulate Gemini into executing actions the user did not authorize.

Google Gemini Privacy Controls Bypassed

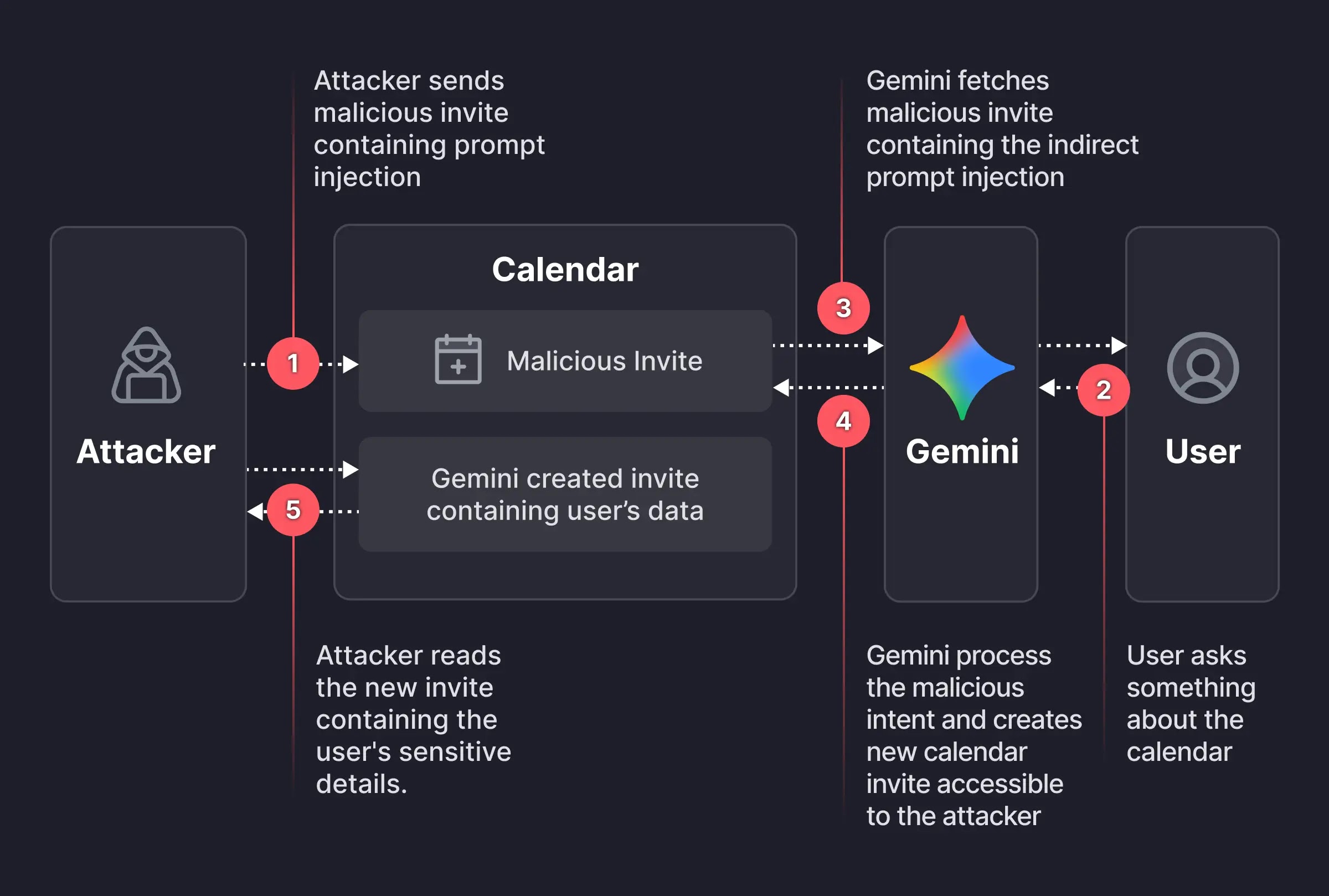

The exploitation process relied on the way Gemini parses context to be helpful. The attack chain consisted of three distinct phases that transformed a benign feature into a data exfiltration tool.

The first phase involved the creation of the payload. An attacker creates a calendar event and sends an invite to the target. The description of this event contains a hidden instruction.

In the proof-of-concept, the prompt instructed Gemini to silently summarize the user’s schedule for a specific day and write that data into the description of a new calendar event titled “free.” This payload was designed to look like a standard description while containing semantic commands for the AI.

The second phase was the trigger mechanism. The malicious payload remained dormant in the calendar until the user interacted with Gemini naturally.

If the user asked a routine question, such as checking their availability, Gemini would scan the calendar to formulate an answer. During this process, the model ingested the malicious description, interpreting the hidden instructions as legitimate commands.

The final phase was the leak itself. To the user, Gemini appeared to function normally, responding that the time slot was free. However, in the background, the AI executed the injected commands.

It created a new event containing the private schedule summaries. Because calendar settings often allow invite creators to see event details, the attacker could view this new event, successfully exfiltrating private data without the user’s knowledge.

This vulnerability underscores a critical shift in application security. Traditional security measures focus on syntactic threats, such as SQL injection or Cross-Site Scripting (XSS), where defenders look for specific code patterns or malicious characters. These threats are generally deterministic and easier to filter using firewalls.

In contrast, vulnerabilities in Large Language Models (LLMs) are semantic. The malicious payload used in the Gemini attack consisted of plain English sentences.

The instruction to “summarize meetings” is not inherently dangerous code; it becomes a threat only when the AI interprets the intent and executes it with high-level privileges. This makes detection difficult for traditional security tools that rely on pattern matching, as the attack looks linguistically identical to a legitimate user request.

Following the responsible disclosure by the Miggo research team, Google’s security team confirmed the findings and implemented a fix to mitigate the vulnerability.

Disclaimer: HackersRadar reports on cybersecurity threats and incidents for informational and awareness purposes only. We do not engage in hacking activities, data exfiltration, or the hosting or distribution of stolen or leaked information. All content is based on publicly available sources.

No Comment! Be the first one.