Google Warns: Hackers Are Using AI to Create Working Zero-Day Exploits

The Google Threat Intelligence Group (GTIG) has published a report detailing the rapid industrialization of generative artificial intelligence within adversarial workflows, a development that marks a concerning shift in cyber threat capabilities.

The report's most significant finding is that a cybercriminal syndicate developed a working zero-day exploit with the help of artificial intelligence. The Python-based exploit was designed to bypass two-factor authentication (2FA) in a popular open-source web administration tool.

According to GTIG’s Q2 2026 findings, cybercrime threat actors collaborated to plan a mass exploitation campaign targeting a popular open-source web-based system administration tool.

The exploit was a Python script that enabled a 2FA bypass on the platform, and analysis of its code strongly suggests that it was AI-generated.

AI Zero-Day Exploit

Indicators included an abundance of educational docstrings, a hallucinated CVSS score, and a clean, “textbook Pythonic” structure characteristic of code produced by large language models (LLMs). GTIG responsibly disclosed the vulnerability to the affected vendor and disrupted the operation before it could be carried out at scale.

The flaw itself was not a memory corruption bug or an input sanitization failure but a high-level semantic logic vulnerability: a hardcoded trust assumption in the 2FA enforcement logic that traditional static application security testing (SAST) tools and fuzzers would likely miss.
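The affected tool's code is not reproduced in the report summary, so the following Python snippet is only a hypothetical illustration of the general bug class described above: a second factor that gets skipped because of a hardcoded trust assumption. Every name in it (`LoginRequest`, `TRUSTED_ADDRESSES`, `login_flawed`, `login_fixed`) is invented for this sketch, and it assumes every account is enrolled in 2FA.

```python
from dataclasses import dataclass

# Hypothetical allowlist: the hardcoded trust assumption lives here.
TRUSTED_ADDRESSES = {"127.0.0.1"}


@dataclass
class LoginRequest:
    username: str
    password_ok: bool   # result of the (assumed correct) password check
    otp_ok: bool        # result of the (assumed correct) one-time-code check
    client_ip: str      # how this value is derived is what matters for the bug


def login_flawed(req: LoginRequest) -> bool:
    """Vulnerable pattern: 2FA is skipped for 'trusted' addresses."""
    if not req.password_ok:
        return False
    if req.client_ip in TRUSTED_ADDRESSES:
        # Logic flaw: if client_ip can be influenced by the caller
        # (e.g. taken from a proxy header), the second factor is bypassed.
        return True
    return req.otp_ok


def login_fixed(req: LoginRequest) -> bool:
    """Safer pattern: the second factor is enforced for every login."""
    return req.password_ok and req.otp_ok
```

Nothing in `login_flawed` resembles a classic vulnerability pattern: there is no unsafe string handling or dangerous function call for a rule-based scanner to flag, and a fuzzer gets no crash or error signal because the bypass is an ordinary, successful login. The bug only appears when someone asks whether the value behind the trust decision can be controlled by the client, which is the kind of semantic reasoning the report credits LLMs with.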

As the comparison chart below illustrates, frontier LLMs are particularly well suited to identifying this category of high-level logic flaw.

Beyond cybercrime groups, GTIG observed PRC- and DPRK-linked threat actors systematically leveraging AI to discover vulnerabilities at scale.

UNC2814 used “persona-driven” jailbreaking, prompting Gemini to act as a senior C/C++ binary security expert in order to probe TP-Link firmware and OFTP implementations.

APT45 took this further by sending thousands of repetitive, automated prompts to recursively analyze CVEs and validate proof-of-concept exploits, producing an AI-augmented arsenal that would be operationally impractical without AI assistance.

APT27, a PRC-nexus actor, was also observed using Gemini to accelerate development of a fleet management application for an operational relay box (ORB) network. The application contained a hardcoded “maxHops=3” setting and mobile device types intended to obscure the origin of intrusions.

PROMPTSPY Malware Powered by Gemini

One of the most alarming discoveries in the report is PROMPTSPY, an Android backdoor first identified by ESET that integrates Google’s Gemini API directly into its execution flow.

PROMPTSPY’s “GeminiAutomationAgent” module serializes the device’s visible UI hierarchy into XML, sends it to Gemini’s gemini-2.5-flash-lite model, and receives structured JSON commands, including CLICK and SWIPE gestures, which it uses to navigate the victim’s device without human involvement.

The malware can also capture biometric data, deploy invisible overlays to block uninstallation, and dynamically rotate its C2 infrastructure and Gemini API keys at runtime to evade defender countermeasures. Google has since disabled all assets associated with PROMPTSPY, and no infected apps have been found on Google Play.

Russia-nexus threat actors targeting Ukrainian organizations have deployed AI-enabled malware families, notably CANFAIL and LONGSTREAM, that use LLM-generated “decoy logic” to camouflage malicious functionality.

LONGSTREAM, for instance, contains 32 redundant daylight saving time queries interspersed throughout its code, a pattern designed to appear benign to static analyzers. Another family, HONESTCUE, queries the Gemini API in real time to request just-in-time VBScript obfuscation, dynamically defeating signature-based detection.

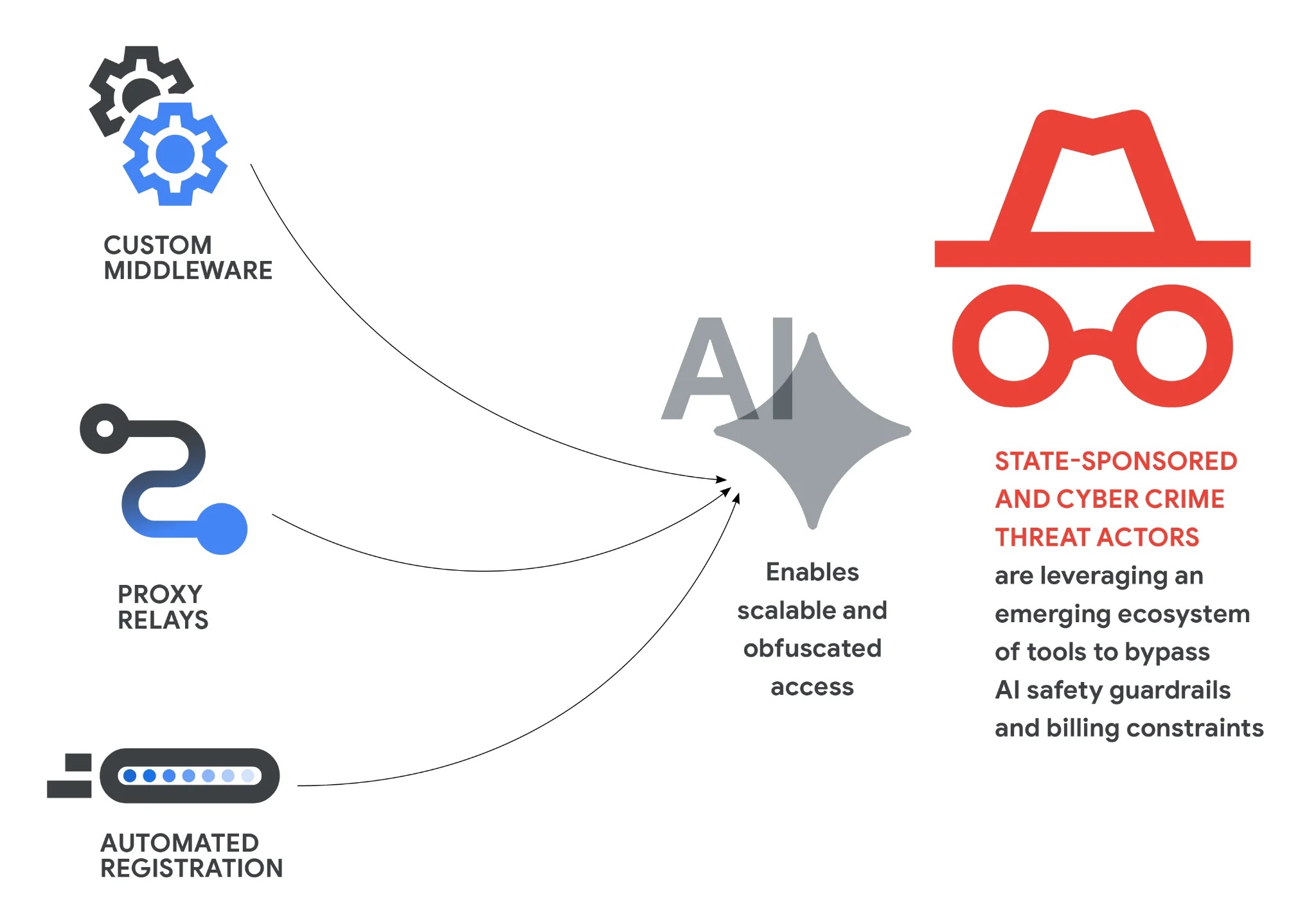

State-sponsored and cybercriminal groups are no longer relying on simple API access; they are building professionalized middleware ecosystems to bypass AI safety guardrails and billing constraints at scale.

PRC-linked UNC6201 was observed using a publicly available GitHub Python script that automates premium LLM account registration, CAPTCHA bypassing, SMS verification, and immediate cancellation to cycle free credits.

UNC5673 deployed tools like “Claude-Relay-Service” and “CLI-Proxy-API” to aggregate and pool multiple Gemini, Claude, and OpenAI accounts simultaneously.

In late March 2026, the cybercrime group TeamPCP (aka UNC6780) executed coordinated supply chain compromises of GitHub repositories linked to the Trivy vulnerability scanner, Checkmarx, LiteLLM, and BerriAI.

The group embedded the SANDCLOCK credential stealer to harvest AWS keys and GitHub tokens directly from CI/CD build environments, then monetized stolen credentials through ransomware and extortion partnerships.

The compromise of LiteLLM, a widely used AI gateway utility for integrating multiple LLM providers, is particularly concerning because it exposes AI API secrets that threat actors can exploit to pivot into enterprise networks or conduct AI-assisted reconnaissance at scale.

Google is also putting AI to work on defense. The company uses its Big Sleep agent to identify software vulnerabilities and its CodeMender AI agent to patch them automatically.

Google disables Gemini accounts used for malicious activity upon detection, and Google Play Protect automatically protects Android devices against known PROMPTSPY variants.

GTIG’s findings underscore an urgent need for organizations to audit CI/CD pipelines, GitHub tokens, and AI dependency chains as LLM-integrated environments become primary targets for sophisticated adversaries.
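As one small, concrete step toward auditing AI dependency chains, the following Python sketch scans a pip `requirements.txt` for entries that are not pinned to an exact version, lack `--hash` integrity values, or appear on a watchlist you supply (for example, `litellm`). The function names and the watchlist are assumptions made for this sketch, and it has no knowledge of which releases were affected by the compromises described above, so a watchlist hit only means "check this against vendor advisories".

```python
import re

NAME_RE = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")
# Exact pin: project name, optional [extras], then "==" and a version.
PIN_RE = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*\S+")


def normalize(name: str) -> str:
    """PEP 503-style normalization so 'LiteLLM' and 'litellm' compare equal."""
    return re.sub(r"[-_.]+", "-", name).lower()


def logical_lines(text: str):
    """Drop comments and join backslash-continued lines, as pip does."""
    buf = ""
    for raw in text.splitlines():
        line = re.sub(r"(^|\s)#.*$", "", raw).rstrip()
        if line.endswith("\\"):
            buf += line[:-1] + " "
            continue
        yield (buf + line).strip()
        buf = ""
    if buf.strip():
        yield buf.strip()


def audit_requirements(text: str, watchlist: set[str]) -> list[tuple[str, str]]:
    """Return (project, issue) pairs for risky entries in a requirements file."""
    watch = {normalize(w) for w in watchlist}
    findings = []
    for line in logical_lines(text):
        if not line or line.startswith("-"):
            continue  # blank lines and pip options such as -r or --index-url
        m = NAME_RE.match(line)
        if m is None:
            continue  # URLs and local paths are out of scope for this sketch
        name = normalize(m.group(1))
        if not PIN_RE.match(line):
            findings.append((name, "not pinned to an exact version"))
        if "--hash=" not in line:
            findings.append((name, "no --hash for integrity checking"))
        if name in watch:
            findings.append((name, "on watchlist: check against vendor advisories"))
    return findings
```

Exact pins plus hashes are what pip's `--require-hashes` mode enforces at install time; this sketch only reports gaps and does not cover lock files from other tools, CI secrets, or GitHub token scopes, which the report also flags.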

Disclaimer: HackersRadar reports on cybersecurity threats and incidents for informational and awareness purposes only. We do not engage in hacking activities, data exfiltration, or the hosting or distribution of stolen or leaked information. All content is based on publicly available sources.