Microsoft AI Generates Commands & Processes Telemetry

Artificial intelligence has reached a critical milestone: it can now generate attack telemetry that closely mimics real-world threats. This advancement, detailed in a …

This shift comes at a time when organizations are drowning in logs but still struggle to validate whether their alerts will actually catch a skilled attacker. Traditional testing methods rely on a limited set of scripts, replayed incidents, or handcrafted test cases that rarely capture the creativity of modern threat actors.

Synthetic, AI-generated telemetry offers a way to safely simulate risky behavior without exposing production systems to real malware.

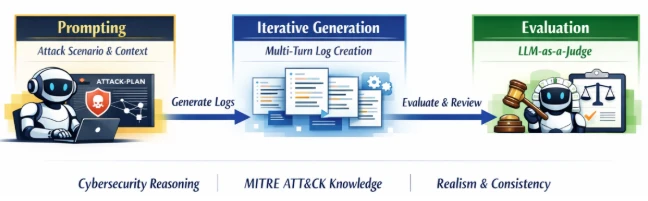

According to researchers at Microsoft, the project focuses on training models that understand how real attacks unfold across command lines, processes, and parent-child relationships.

By learning from curated telemetry and red team exercises, the model can propose new sequences of commands that are plausible, coherent, and context-aware within a specific environment.

The result is a stream of test data that pushes existing detections in far more diverse and realistic ways than most manual approaches can manage.

For security teams, the impact is twofold. First, they gain a repeatable way to evaluate analytics before an attacker ever shows up in their logs. Second, they can use the same synthetic scenarios to train analysts, tune triage workflows, and understand how changes to logging or configuration affect visibility over time.

AI Can Generate Realistic Command Lines

At the heart of the work is using generative models to create commands that match how real tools and operating systems behave, rather than random strings that only look suspicious on paper.

The system considers things like argument order, common administrative patterns, and how one command naturally leads to another during lateral movement or credential theft. This turns raw model output into executable sequences that defenders can safely replay in lab or test environments.
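
The article does not describe the model's internals. As a deliberately simple stand-in for the idea that one command naturally leads to another, the sketch below walks a toy Markov chain over discovery-style command templates and fills their placeholders from the lab environment. The template names, transition weights, context keys, and `generate_sequence` are all hypothetical, and a hand-written transition table is a much cruder technique than the generative model Microsoft's researchers describe.

```python
import random

# Hypothetical discovery-stage command templates. Placeholders are filled from
# the lab environment so the same scenario fits a specific domain and user.
TEMPLATES = {
    "whoami": "whoami /all",
    "ipconfig": "ipconfig /all",
    "net_user": "net user {user} /domain",
    "net_group": 'net group "Domain Admins" /domain',
    "nltest": "nltest /dclist:{domain}",
}

# Hypothetical transition weights: which step tends to follow which.
# "start" and "end" are bookkeeping states, not commands.
TRANSITIONS = {
    "start": {"whoami": 0.7, "ipconfig": 0.3},
    "whoami": {"net_user": 0.5, "net_group": 0.5},
    "ipconfig": {"nltest": 1.0},
    "net_user": {"net_group": 1.0},
    "net_group": {"nltest": 0.6, "end": 0.4},
    "nltest": {"end": 1.0},
}


def generate_sequence(context: dict, seed: int = 0, max_steps: int = 10) -> list:
    """Walk the transition table from "start" and render each visited step."""
    rng = random.Random(seed)  # seeded so a scenario can be replayed exactly
    state, commands = "start", []
    for _ in range(max_steps):
        options = TRANSITIONS[state]
        state = rng.choices(list(options), weights=list(options.values()))[0]
        if state == "end":
            break
        # str.format ignores unused keys, so one context dict serves every template
        commands.append(TEMPLATES[state].format(**context))
    return commands


if __name__ == "__main__":
    lab = {"user": "alice", "domain": "lab.example"}
    for cmd in generate_sequence(lab, seed=1):
        print(cmd)
```

Fixing the `seed` makes a scenario repeatable, which is what allows the same attack sequence to be replayed against a detection before and after it is tuned.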

The research also explores building process trees from scratch so that each synthetic command is linked to its parent and child in a realistic way. That matters because many advanced detections rely on unusual process relationships instead of single log lines viewed in isolation. By mirroring these relationships, AI-generated telemetry becomes a far better stand-in for real attacker behavior.
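
To see why parent-child links matter, here is a minimal sketch assuming a hypothetical in-memory `Process` tree and a hypothetical rule keyed on (parent image, child image) pairs. The same PowerShell command line appears twice; the rule fires only on the copy launched by `winword.exe`, because what it inspects is the relationship, not the log line in isolation.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


@dataclass
class Process:
    pid: int
    image: str
    command_line: str
    # repr/compare disabled on the back-reference to avoid infinite recursion
    parent: Optional["Process"] = field(default=None, repr=False, compare=False)
    children: List["Process"] = field(default_factory=list)

    def spawn(self, pid: int, image: str, command_line: str) -> "Process":
        """Create a child process and link it to this parent."""
        child = Process(pid, image, command_line, parent=self)
        self.children.append(child)
        return child


# Hypothetical rule: (parent image, child image) pairs treated as unusual.
SUSPICIOUS_PAIRS = {
    ("winword.exe", "powershell.exe"),
    ("excel.exe", "cmd.exe"),
}


def walk(root: Process) -> Iterator[Process]:
    """Depth-first traversal of the process tree."""
    yield root
    for child in root.children:
        yield from walk(child)


def flag_unusual_lineage(root: Process) -> List[Process]:
    """Return processes whose parent/child image pair matches the rule."""
    return [
        p for p in walk(root)
        if p.parent is not None and (p.parent.image, p.image) in SUSPICIOUS_PAIRS
    ]


if __name__ == "__main__":
    root = Process(100, "explorer.exe", "explorer.exe")
    word = root.spawn(200, "winword.exe", "winword.exe report.docx")
    word.spawn(300, "powershell.exe", "powershell.exe -NoProfile -Command Get-Date")
    root.spawn(400, "powershell.exe", "powershell.exe -NoProfile -Command Get-Date")
    print([p.pid for p in flag_unusual_lineage(root)])  # [300]
```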

Importantly, the team highlights guardrails to prevent abuse of the technology. Models are trained and used inside controlled environments, with access scoped to security engineering scenarios rather than public interfaces. The goal is to help defenders practice against believable attack patterns, not to hand ready-made playbooks to threat actors.

What this means for defenders

One of the main promises of this approach is faster, more reliable detection engineering cycles. Instead of writing a rule, waiting weeks to see if it ever fires, and then guessing why it stayed quiet, engineers can immediately barrage their SIEM, endpoint platform, or data lake with synthetic attacks that follow realistic kill chains. This shortens feedback loops and helps teams understand which analytics truly add coverage and which only look good in documentation.
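
One way to picture the shortened feedback loop is a coverage check: replay each synthetic scenario through every analytic and record which ones fire. This sketch assumes events are plain dictionaries with a `command_line` field and that analytics can be written as Python predicates; `RULES`, the rule names, and `coverage` are hypothetical, and real SIEM or endpoint rules would be written in each platform's own query language.

```python
from typing import Callable, Dict, List, Set

Event = Dict[str, str]
Rule = Callable[[Event], bool]

# Hypothetical analytics written as predicates over a `command_line` field.
RULES: Dict[str, Rule] = {
    "domain_admin_enum": lambda e: "domain admins" in e["command_line"].lower(),
    "dc_discovery": lambda e: e["command_line"].lower().startswith("nltest /dclist"),
    "encoded_powershell": lambda e: "-encodedcommand" in e["command_line"].lower(),
}


def coverage(scenarios: Dict[str, List[Event]], rules: Dict[str, Rule]) -> Dict[str, Set[str]]:
    """Map each rule name to the set of scenario IDs in which it fired at least once."""
    return {
        name: {sid for sid, events in scenarios.items() if any(rule(e) for e in events)}
        for name, rule in rules.items()
    }


if __name__ == "__main__":
    scenarios = {
        "recon-1": [{"command_line": "whoami /all"},
                    {"command_line": 'net group "Domain Admins" /domain'}],
        "recon-2": [{"command_line": "nltest /dclist:lab.example"}],
    }
    result = coverage(scenarios, RULES)
    silent = [name for name, hits in result.items() if not hits]
    print(silent)  # ['encoded_powershell']
```

A rule whose set stays empty across many realistic scenarios is a candidate for the "only looks good in documentation" bucket, though it may also simply mean the scenarios do not yet exercise that technique.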

Microsoft’s researchers recommend that organizations start by integrating synthetic logs into isolated environments, where detection content can be iterated quickly without risking noise in production. Over time, teams can schedule controlled “attack exercises” using AI-generated command sequences that run alongside normal traffic, all labeled as test activity for safe analysis.
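
Labeling is what keeps such exercises safe to analyze alongside normal traffic. A minimal sketch, assuming events are dictionaries; the `synthetic`, `test_run_id`, and `injected_at` field names and both functions are hypothetical conventions, not fields of any particular product.

```python
import uuid
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple


def label_synthetic(events: List[Dict], run_id: Optional[str] = None) -> List[Dict]:
    """Return tagged copies of events; the input dictionaries are not modified."""
    run_id = run_id or str(uuid.uuid4())  # one ID per exercise run
    stamp = datetime.now(timezone.utc).isoformat()
    return [{**e, "synthetic": True, "test_run_id": run_id, "injected_at": stamp}
            for e in events]


def split_by_origin(events: List[Dict]) -> Tuple[List[Dict], List[Dict]]:
    """Separate labeled test activity from all other events."""
    test = [e for e in events if e.get("synthetic") is True]
    other = [e for e in events if e.get("synthetic") is not True]
    return test, other
```

Returning tagged copies rather than mutating the input keeps a generated scenario reusable across runs, with each run distinguishable by its `test_run_id`.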

They also stress the importance of pairing these tests with clear success metrics, like time to detection, alert fidelity, and the number of manual steps analysts must take to confirm a finding.
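
The success metrics named here can be computed directly from an exercise log. This sketch assumes each alert can be mapped back to the scenario that triggered it (or to none), that analysts record how many manual steps confirmation took, and it interprets alert fidelity as the share of alerts that map to injected activity. The `Alert` type and `exercise_metrics` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Alert:
    scenario_id: Optional[str]  # None if the alert maps to no injected scenario
    fired_at: datetime
    manual_steps: int  # steps an analyst took to confirm the finding


def exercise_metrics(injected: Dict[str, datetime], alerts: List[Alert]) -> dict:
    """Summarize one exercise: detection rate, time to detection, fidelity, effort."""
    first_alert: Dict[str, datetime] = {}
    for a in sorted(alerts, key=lambda x: x.fired_at):
        if a.scenario_id in injected and a.scenario_id not in first_alert:
            first_alert[a.scenario_id] = a.fired_at
    # time to detection: injection time to the earliest matching alert
    ttd = [first_alert[s] - injected[s] for s in first_alert]
    matched = [a for a in alerts if a.scenario_id in injected]
    return {
        "detection_rate": len(first_alert) / len(injected) if injected else None,
        "mean_time_to_detect": sum(ttd, timedelta()) / len(ttd) if ttd else None,
        # interpreted here as precision: share of alerts tied to injected activity
        "alert_fidelity": len(matched) / len(alerts) if alerts else None,
        "mean_manual_steps": mean(a.manual_steps for a in matched) if matched else None,
    }
```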

Another key recommendation is to continuously refresh training data and scenarios so that synthetic telemetry evolves with new tradecraft. As adversaries adopt fresh techniques or target new services, defenders should fold those patterns into the data used to guide generation. This helps ensure that models do not get stuck replaying outdated attack styles that no longer reflect current threats.

The research points out that realistic synthetic data can also benefit organizations that lack large volumes of historic incidents. Smaller teams, or those early in their security journey, can still build and validate detections against a wide range of attack behaviors without waiting for actual breaches.

Combined with existing threat intelligence and red teaming, AI-generated command lines and process telemetry become another tool that levels the playing field for defenders.

As with any powerful new technique, the benefits come with responsibility. The authors emphasize strong governance around who can generate and run synthetic attacks, where they can be executed, and how the resulting data is labeled and stored. Handled carefully, AI assistance in detection engineering offers a way to turn the complexity of modern logs into an advantage rather than a burden.

Disclaimer: HackersRadar reports on cybersecurity threats and incidents for informational and awareness purposes only. We do not engage in hacking activities, data exfiltration, or the hosting or distribution of stolen or leaked information. All content is based on publicly available sources.
