Hackers Use AI Content on Google Discover for Malicious Push

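A newly identified threat operation, dubbed Pushpaganda, is actively exploiting Google Discover, using AI-generated content to lure users into subscribing to malicious push notifications.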

The operation was identified by HUMAN’s Satori Threat Intelligence and Research Team, in research led by Louisa Abel, Vikas Parthasarathy, João Santos, and Adam Sell.

They noted that at its peak, Pushpaganda generated approximately 240 million bid requests tied to its domains within a single seven-day window.
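For a sense of scale, spreading that peak week's volume evenly across the seven days (a simplifying assumption, since the researchers did not publish a daily breakdown) works out to roughly:

```latex
\frac{240 \times 10^{6}\ \text{requests}}{7\ \text{days}} \approx 3.43 \times 10^{7}\ \text{requests/day},
\qquad
\frac{240 \times 10^{6}\ \text{requests}}{7 \times 86\,400\ \text{s}}
= \frac{240 \times 10^{6}\ \text{requests}}{604\,800\ \text{s}} \approx 397\ \text{requests/s}
```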

The campaign initially targeted users in India before expanding its reach to Australia, the United States, and additional regions.

The research team shared all 113 identified Pushpaganda-associated domains with Google, which confirmed that it has since deployed a fix to prevent this type of low-quality, manipulative content from surfacing in Discover feeds.

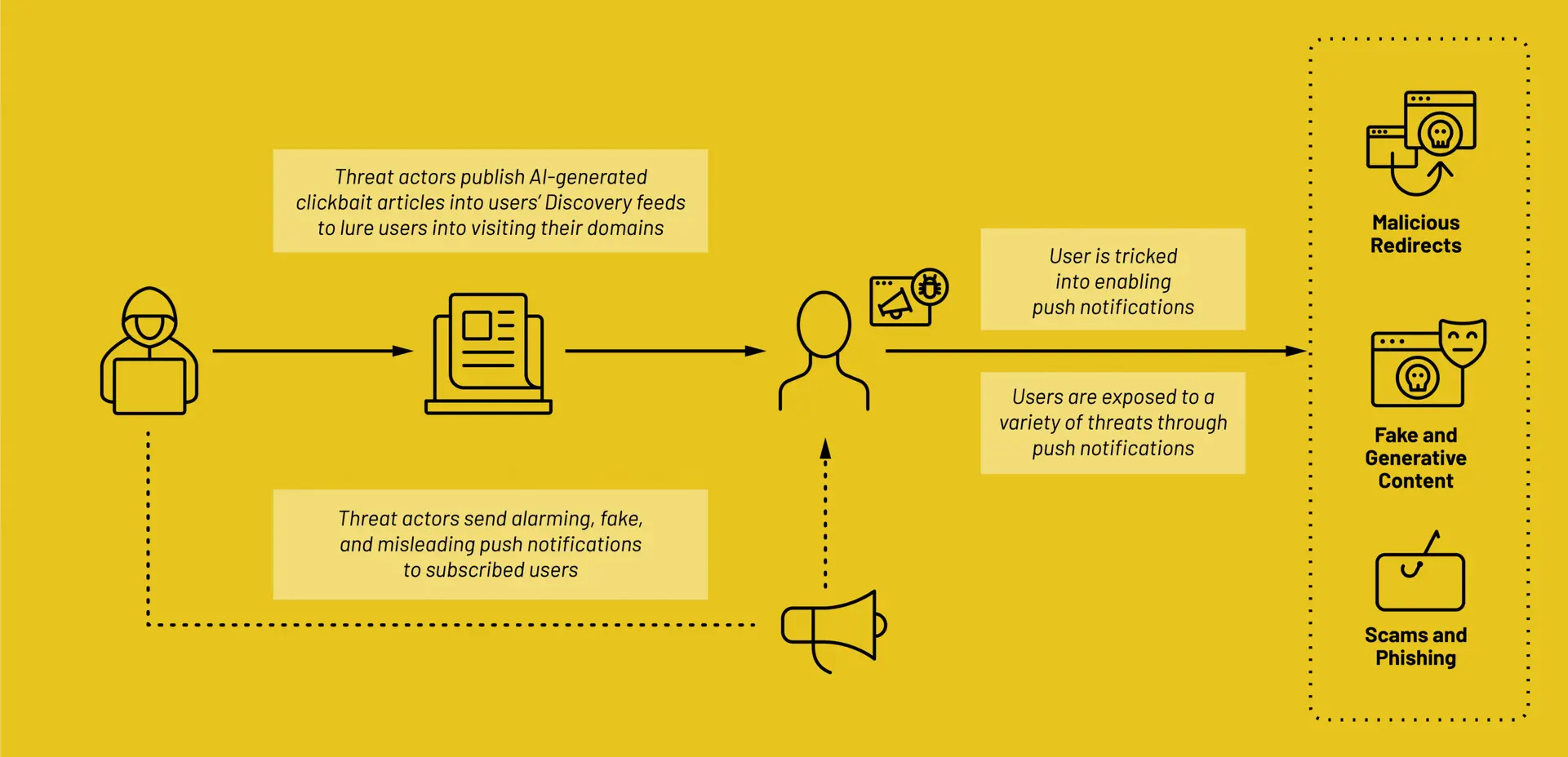

The scale and reach of this operation highlight a growing trend of threat actors weaponizing trusted content distribution platforms.

Because Google Discover is a built-in system feature rather than a downloadable app, users have limited control over what appears in it, making it an unusually effective entry point for this kind of social engineering attack.

How the Deceptive UI and JavaScript Rotation Worked

One of the more technically sophisticated elements of Pushpaganda was its use of deceptive interface buttons and a JavaScript-based tab rotation mechanism.

When users visited an actor-controlled domain, they encountered buttons labeled “Apply Now,” “Claim Now,” or “Join WhatsApp” — language that implied a legitimate action.

Rather than completing the advertised function, these buttons used JavaScript to open new browser tabs pointing to additional Pushpaganda-linked domains.
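The mismatch between what a button promises and where it actually goes is something defenders can check for. The sketch below is a minimal, hypothetical heuristic, not HUMAN's detection logic: it assumes call-to-action elements have already been extracted into a made-up `CtaLink` shape, and the `EXPECTED_HOSTS` keyword table is an illustrative assumption rather than an indicator set from the research. A label naming a known service must point at that service; a generic label such as "Apply Now" is flagged if it leaves the current site.

```typescript
// Hypothetical shape for a call-to-action element extracted from a page.
interface CtaLink {
  label: string; // visible button text, e.g. "Join WhatsApp"
  targetUrl: string; // where the click actually navigates or opens a tab
}

// Illustrative assumption: label keywords mapped to the hosts a user would expect.
const EXPECTED_HOSTS: Array<[string, string[]]> = [
  ["whatsapp", ["whatsapp.com", "wa.me"]],
];

// True if `host` is `domain` itself or any subdomain of it.
function hostMatches(host: string, domain: string): boolean {
  return host === domain || host.endsWith("." + domain);
}

// Flags a CTA whose destination does not match what its label implies.
function isSuspiciousCta(cta: CtaLink, pageHost: string): boolean {
  let target: URL;
  try {
    // Relative targets are resolved against the current page.
    target = new URL(cta.targetUrl, `https://${pageHost}/`);
  } catch {
    return true; // unparsable targets are treated as suspicious
  }
  const host = target.hostname.toLowerCase();
  const label = cta.label.toLowerCase();

  for (const [keyword, hosts] of EXPECTED_HOSTS) {
    if (label.includes(keyword)) {
      // The label names a known service, so the target must belong to it.
      return !hosts.some((d) => hostMatches(host, d));
    }
  }
  // Generic labels ("Apply Now", "Claim Now"): flag if they leave the page's site.
  return !hostMatches(host, pageHost.toLowerCase());
}
```

This is deliberately coarse: legitimate sites do sometimes send "Apply Now" buttons to third-party portals, so a hit is a prompt for review rather than proof of abuse.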

Meanwhile, in the tab left running in the background after the click, a separate JavaScript routine took over, cycling that inactive tab through a predetermined sequence of actor-owned pages.

This mechanism quietly loaded ads and extended session durations on those pages, making the sites appear as high-quality traffic sources to advertising networks.
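A tab that keeps hopping between different sites while nobody is looking at it is an unusual pattern, and one way an ad-fraud team might look for it is sketched below. This is a hypothetical heuristic under stated assumptions, not a published detection method: it assumes a made-up telemetry record per navigation that includes whether the tab was hidden (as reported by the browser's `document.visibilityState`), and the thresholds of three distinct hosts within five minutes are arbitrary placeholders.

```typescript
// Hypothetical telemetry record: one entry per top-level navigation in a tab.
interface NavEvent {
  tabId: number;
  timestampMs: number;
  url: string;
  hidden: boolean; // true if document.visibilityState was "hidden" at the time
}

// Returns tab IDs that visited at least `minDistinctHosts` different hosts while
// hidden, within some sliding window of `windowMs`. Thresholds are assumptions.
function findRotatingTabs(
  events: NavEvent[],
  minDistinctHosts = 3,
  windowMs = 5 * 60_000,
): number[] {
  // Group background navigations by tab; visible navigations are ignored.
  const byTab = new Map<number, NavEvent[]>();
  for (const e of events) {
    if (!e.hidden) continue;
    const list = byTab.get(e.tabId) ?? [];
    list.push(e);
    byTab.set(e.tabId, list);
  }

  const flagged: number[] = [];
  byTab.forEach((list, tabId) => {
    list.sort((a, b) => a.timestampMs - b.timestampMs);
    let start = 0;
    for (let end = 0; end < list.length; end++) {
      // Advance the left edge until the window spans at most windowMs.
      while (list[end].timestampMs - list[start].timestampMs > windowMs) start++;
      const hosts = new Set(
        list.slice(start, end + 1).map((e) => new URL(e.url).hostname),
      );
      if (hosts.size >= minDistinctHosts) {
        flagged.push(tabId);
        return; // one hit is enough for this tab; move on to the next
      }
    }
  });
  return flagged.sort((a, b) => a - b);
}
```

Counting distinct hosts rather than raw page loads is the key design choice here: ordinary background activity such as a single page refreshing itself stays on one host, whereas the rotation described above moves across several actor-owned domains.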

The result was inflated ad revenue for the threat actors — entirely generated from users who never intended to interact with those pages.

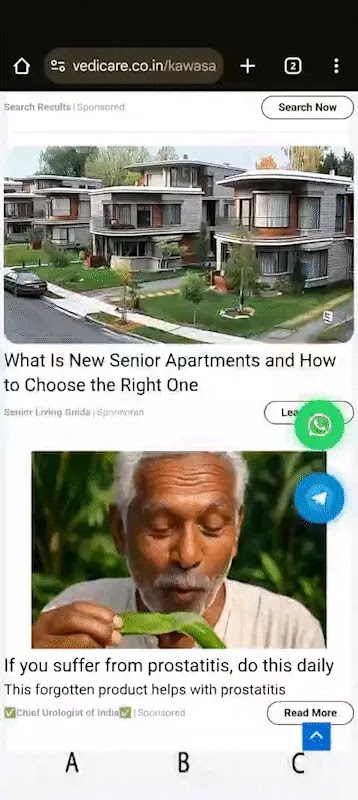

Satori researchers also observed deepfake videos and images embedded in ads on these domains, some falsely depicting well-known celebrities and medical professionals to further exploit user trust at scale.

Users who believe they may have subscribed to Pushpaganda-linked notifications should immediately review their browser notification permissions and revoke access for any unfamiliar or suspicious domains.

On Chrome for Android, open Chrome’s menu and go to Settings → Site settings → Notifications. Users should also avoid clicking “Allow” on notification prompts from websites they do not recognize or trust, especially those reached through news feed links.

From an organizational standpoint, security teams are advised to monitor for unusual push notification subscription activity on managed devices and treat any OS-level alerts mimicking legal or financial authorities as indicators of a social engineering attempt.
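As one way to act on that advice, the sketch below triages an inventory of notification permissions granted on managed devices. Everything here is a hypothetical assumption: the `NotificationGrant` record stands in for whatever a device management tool can export, and because the 113 domains HUMAN shared with Google are not listed in this article, the indicator list is something an organization would have to supply itself (the test values are placeholders). Origins matching the indicator list are marked `known-bad`; anything not on the organization's allowlist is marked `unapproved` for review.

```typescript
// Hypothetical inventory record exported from device management tooling.
interface NotificationGrant {
  deviceId: string;
  origin: string; // e.g. "https://news.example"
}

type Verdict = "known-bad" | "unapproved" | "ok";

// True if `host` equals, or is a subdomain of, any entry in `domains`.
function hostInList(host: string, domains: readonly string[]): boolean {
  return domains.some((d) => host === d || host.endsWith("." + d));
}

// Classifies each grant. Indicators are checked first, so a domain that appears
// on both lists is still reported as known-bad.
function auditGrants(
  grants: NotificationGrant[],
  allowlist: readonly string[],
  indicators: readonly string[],
): Array<NotificationGrant & { verdict: Verdict }> {
  return grants.map((g) => {
    const host = new URL(g.origin).hostname.toLowerCase();
    const verdict: Verdict = hostInList(host, indicators)
      ? "known-bad"
      : hostInList(host, allowlist)
        ? "ok"
        : "unapproved";
    return { ...g, verdict };
  });
}
```

Treating every non-allowlisted origin as `unapproved`, rather than trusting anything not yet on an indicator list, matches the researchers' note that new Pushpaganda domains may keep appearing.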

Satori researchers continue to monitor for new Pushpaganda-associated domains and any signs of threat actor adaptation, recommending that ad fraud and click fraud detection measures remain active across all web-facing environments.

Disclaimer: HackersRadar reports on cybersecurity threats and incidents for informational and awareness purposes only. We do not engage in hacking activities, data exfiltration, or the hosting or distribution of stolen or leaked information. All content is based on publicly available sources.
